Personal computing discussed

Waco wrote:Turn settings down and breathe easy. Honestly, the obsession with "MAX ULTRA KILLAH SETTINGS" is somewhat pointless and a great way to spend a lot of money.

End User wrote:The ability to maintain high settings is not a pointless endeavour. Sacrificing high settings to gain 4K is.

Waco wrote:End User wrote:The ability to maintain high settings is not a pointless endeavour. Sacrificing high settings to gain 4K is.

I didn't say it was pointless, I said the drive to run everything maxed out is.

"High" is relative. If you can run a game at medium settings and it still looks good to you, who cares if there's 19 more notches on the slider?

They're all arbitrary settings anyway. I'd much rather play 4K with a few details turned down than at 1080p with EVERUHTHANG MAXXXED.

End User wrote:The ability to maintain high settings is not a pointless endeavour. Sacrificing high settings to gain 4K is.

dragontamer5788 wrote:Low resolution with correct shadows, good antialiasing, nice edges and the like... even at 720p... is my preferred way of gaming. High-resolution helps, but if I notice bad shadows it really messes with my immersion. I guess low-resolution is kind of a controlled detonation. I'm used to low resolution so I've grown to accept it.

With that being said, "Uber" preset on like, the Witcher is a bit much for me and I don't really see a big difference. Antialiasing and Ambient Occlusion are big, so those need to be turned up for me.

pizza65 wrote:Waco wrote:Turn settings down and breathe easy. Honestly, the obsession with "MAX ULTRA KILLAH SETTINGS" is somewhat pointless and a great way to spend a lot of money.

Yeah that's fair. I think it seems harder than it used to be to work out what you can turn down without massive visual impact. Back in the day I'd just put everything on high and then turn down the Shadows setting until I got a high enough framerate, but the number of options available now makes it hard to tell what to disable.

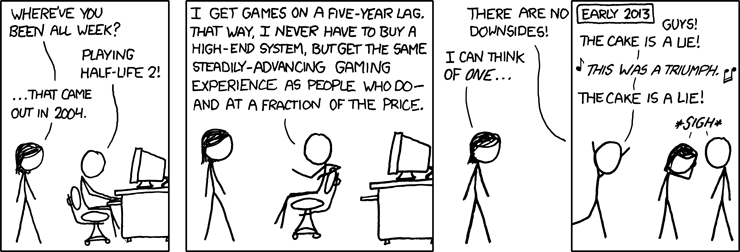

jihadjoe wrote:Playing games with a 5-year lag.

Aside from a handful that really have to be played online which have old engines anyway (Diablo III, SC2 and some fighting games), I'm mostly in it for the single player. Playing games with a 5-year lag not only makes them easier on the hardware, the games themselves are likely to be on sale and any issues at launch would have been patched out.

JustAnEngineer wrote:I'm pushing a 2560x1440 monitor at 144 Hz. If the frame rates drop off, that's what VESA adaptive sync is for.

pizza65 wrote:I realise I don't have the absolute fastest GPU out there but even so it's still comfortably in the top-end of modern hardware, and yet it seems like running much more than a bog standard 1080p monitor is unlikely to perform well.

Pancake wrote:pizza65 wrote:I realise I don't have the absolute fastest GPU out there but even so it's still comfortably in the top-end of modern hardware, and yet it seems like running much more than a bog standard 1080p monitor is unlikely to perform well.

Ahh, no. And that's your problem. Your GTX1060 is most decidedly pedestrian mainstream. Not top-end. You want to play in 4K you gonna want spend more for something high-end.

ptsant wrote:I'm profoundly bothered by a short view distance and objects popping in/out of view. I also hate aliasing.

Chrispy_ wrote:One thing that is often overlooked on high-refresh monitors is their improvement to vsync gaming. If you don't have a VRR-capable combination of monitor and GPU, then even a 144Hz monitor is a huge upgrade for a GPU that can't handle high-refresh gaming at that resolution.

Let's say your 1060 runs a game you want at 1440p at about 70fps, but the performance ranges from 40-100fps depending on the moment.

On a 1440p 60Hz monitor, every time your GPU can't meet 60fps, your screen repeats a frame, meaning a jarring drop to 30fps. It's at its very worst at 45fps because the animation jerks in and out of phase with the framerate, meaning that you end up with a perceived 15fps animation judder that completely ruins the 45fps experience. Even a constant 30fps experience would be much better than 45fps with animation smoothness issues.

On a 1440p 144Hz monitor, your GPU is never going to render at 144fps, but it'll spend almost half its time above 72fps, which feels pretty smooth, and when it can't handle that it'll drop to 48fps, which is still reasonable. The next quantisation step means that if 48fps can't be met, 36fps is displayed instead. Not only are 48fps and 36fps higher numbers than the 30fps on a 60Hz monitor, you also don't get animation phasing anywhere near as obviously on a high-refresh monitor, because the ratio of frametimes at different frame rates is much closer:

- 30fps dropping from 60Hz is a 1:2 ratio, meaning that the animation rate is (worst-case) 1/2 of the 30fps for the 15fps 'animation judder' I described.

- 36fps dropping from 48fps is a 3:4 ratio, meaning that the animation rate is (worst-case) 3/4 of the 36fps for a much smoother 27fps animation rate.

27fps doesn't sound great, but that's the worst-case scenario and it's an 80% improvement in perceived smoothness over 15fps, something you'll definitely notice. It basically means you can enjoy smoother gaming without tearing, like a partial VRR. Sure, it's not as smooth and analogue as G-Sync or Freesync, but you have adequate smoothness at an easier-to-drive 36, 48, or 72Hz, rather than just a single 60Hz option which results in a bad experience if 60Hz cannot be met.
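For anyone who wants to check the numbers above, here is a rough Python sketch of the quantisation. It assumes plain double-buffered vsync, where each frame is held for a whole number of refresh intervals, and the function names (`vsync_steps`, `vsync_rate`, `worst_case_animation_rate`) are made up for illustration. The last function just encodes the worst-case rule of thumb from the two bullet points, not a measured result.

```python
import math


def vsync_steps(refresh_hz: int, count: int = 4) -> list[float]:
    """Frame rates a vsynced display can show: refresh / 1, / 2, / 3, ..."""
    return [refresh_hz / n for n in range(1, count + 1)]


def vsync_rate(render_fps: float, refresh_hz: int) -> float:
    """Displayed rate when the GPU renders at render_fps.

    Assumed model: a frame that misses a refresh waits for the next one,
    so each frame occupies ceil(refresh / render_fps) refresh intervals.
    """
    intervals = max(1, math.ceil(refresh_hz / render_fps))
    return refresh_hz / intervals


def worst_case_animation_rate(lower_fps: float, upper_fps: float) -> float:
    """Rule of thumb from the post: lower rate scaled by the lower:upper ratio."""
    return lower_fps * (lower_fps / upper_fps)


# 60Hz panel: steps are 60 and 30, so a 45fps GPU gets shown at 30fps.
# vsync_steps(60, 2)       -> [60.0, 30.0]
# vsync_rate(45, 60)       -> 30.0
# 144Hz panel: steps are 144, 72, 48, 36, so the fallbacks are much closer together.
# vsync_steps(144)         -> [144.0, 72.0, 48.0, 36.0]
# vsync_rate(70, 144)      -> 48.0
# Worst-case animation rates quoted above:
# worst_case_animation_rate(30, 60) -> 15.0
# worst_case_animation_rate(36, 48) -> 27.0   (27 / 15 = 1.8, i.e. 80% better)
```

Real drivers with triple buffering or adaptive vsync won't follow this model exactly, so treat it as a way to see where 72, 48 and 36 come from rather than a prediction of what a given game will do.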

roncat wrote:I would also argue as resolution goes up, the need for AA goes away.

auxy wrote:GPU requirements for games are grossly overblown and overstated all the damn time. It's just not that hard to run games.

I used to play stuff at >100 FPS in 4K reso on a 4K60 monitor, and also in 3840x2160 @ 120Hz using Nvidia DSR on a 1920x1080 @ 144Hz monitor, which is MORE demanding than native 4K. That was with a GTX1080Ti. Now I have an RX 580 8GB and it actually plays tons of games just fine in 4K resolution. Lately I've been playing a lot of 20XX with RAGEPRO in co-op, and I play at 120 FPS using Radeon Enhanced Sync in 4K resolution using AMD VSR.

Just don't worry about it. You don't need SLI 1080s to play in 4K. It's just dumb the way people talk about this stuff. ( 一一)

Waco wrote:Pancake wrote:pizza65 wrote:I realise I don't have the absolute fastest GPU out there but even so it's still comfortably in the top-end of modern hardware, and yet it seems like running much more than a bog standard 1080p monitor is unlikely to perform well.

Ahh, no. And that's your problem. Your GTX1060 is most decidedly pedestrian mainstream. Not top-end. You want to play in 4K you gonna want spend more for something high-end.

No, not really. Gaming at 4K doesn't require a top end modern GPU, it just requires a few sacrifices on older cards.

My HTPC has a GTX 780 and a 4K screen. For couch gaming it's perfect with a little tweaking.

Pancake wrote:If you wanna game at all, you wanna game with da fruits. The quality levels dialled up all nice and high. Otherwise why bother?

synthtel2 wrote:Pancake wrote:If you wanna game at all, you wanna game with da fruits. The quality levels dialled up all nice and high. Otherwise why bother?

Because the eye candy isn't actually why I'm here. If it isn't still fun without the eye candy, it isn't actually a good game.